Mill managers know that they could vastly improve the performance of their production systems if only they could make meaning out of all the available data. In current mill environments, only a tiny fraction of that data is put to meaningful use. Excel spreadsheets and other methods that might have worked in the past no longer suffice, given the amount of data the equipment generates and the limits on both the number and the expertise of mill personnel.

This blog, “How to be Smart with Sensors and Data,” explains how all parts of a data analysis system work together to collect and interpret the data and how they can vastly improve production performance.

The Pulmac Industry 4.0 story

Since 1965, Pulmac has been in the business of providing data. We have established ourselves in the industry by providing unique instrumentation that addresses your pain points and supports troubleshooting.

As manufacturing continues to evolve into Industry 4.0, the volume of data available has grown dramatically. Pulmac continues to support the need for real-time insight in decision-making by offering online instrumentation in its portfolio. However, we also recognize the challenges of working with all this additional data.

How do we handle the increasing data load we are being presented with? What is the impact of adding more data?

Is a lot of effort needed for analog sensors?

In the simplest form, adding a traditional analog (4 to 20 milliamp) sensor to your control system is fairly routine. It involves mechanical work on a pipe to insert the sensor element, running signal wire through a conduit or cable tray to a field control panel, wiring to the designated spare terminal blocks, configuring the tag in the control system (range, description, IO address/slot, alarm limits, etc.), and, lastly, calibration. Having completed this scope, you can see a real-time signal in the engineering environment of your control system.
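The "range" part of that tag configuration boils down to a linear mapping from loop current to engineering units. Here is a minimal sketch of that arithmetic in Python. The function name and the 0 to 5 percent range are made up for illustration; in practice the control system's IO card does this scaling for you once the range is entered.

```python
def scale_ma_to_eu(current_ma: float, eu_low: float, eu_high: float) -> float:
    """Linearly map a 4-20 mA loop current onto the configured engineering range.

    4 mA corresponds to eu_low and 20 mA to eu_high (a 16 mA span).
    """
    span_fraction = (current_ma - 4.0) / 16.0
    return eu_low + span_fraction * (eu_high - eu_low)


# Hypothetical transmitter ranged 0 to 5 percent: mid-scale current gives mid-range value.
print(scale_ma_to_eu(12.0, 0.0, 5.0))  # 2.5
```

If the range entered in the tag does not match the range calibrated in the transmitter, every value downstream is wrong by the same linear error, which is why range and calibration appear together in the list above.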

However, you are not nearly done. The signal is of no use if operators cannot see it. Placing the value on a process graphic is relatively simple, but care should be taken not to overload the operator. The more data you put on a screen, the harder it is to find the data that shows you a problem. This issue prompted the development of design standards, such as ISA 101, that guide proper HMI design and ease the burden of presenting a growing volume of data.

Configuring alarm limits and priorities can present another source of overburden. It is, unfortunately, common to configure alarms for everything because it is easy to do, and you would rather be safe than sorry. However, history has shown that this approach is unsafe, as in the Three Mile Island accident. That is why the industry is adopting ISA 18.2 to ensure that alarms are functional and straightforward.

Then there is the historian. Presumably, the sensor you added is essential; therefore, having a history of its output can be helpful in troubleshooting and data analytics. However, adding tags to a historian increases its license count, which drives up cost, and not every data value needs to be historized. Even when a tag is added, it can be hard to find later unless it is organized in an asset tree structure. Many mills have recognized this effort and now address it at the planning stage to ensure accessibility and adoption.

The data from this sensor can impact your network bandwidth. Years ago, I was at a paper mill that overnight transformed from functioning to disabled with workstations that could not connect to controllers. We found that approximately 2,000 tags had been added to a data historian overnight. Without consideration of the impact, adding more data load to your network can have adverse consequences.

There is also a maintenance impact. There needs to be a database of instrumentation, with records kept to ensure that routine calibration is not forgotten.

Keep in mind that, thus far, I have addressed only the needs of a simple analog instrument. Additional considerations arise when smart transmitters are added.

More data, more problems?

The data definition files required to enable communications need to be obtained and maintained. That is one of the justifications for an Asset Management System that can automatically detect sensors and update the files required to make them work.

Smart Transmitters

It turns out that much of the data available from smart transmitters is not being used, which makes it questionable whether the investment was worth it. HART (Highway Addressable Remote Transducer) is a smart-instrument protocol that has been around for about 40 years. It could not be much easier to implement; it uses the same physical 4 to 20 milliamp wired connection as dumb analog sensors but superimposes a digital signal on top of it to carry diagnostics, configuration, and more advanced measurements (like accumulators). Most control systems have configurable IO cards to access the HART data provided by the sensor. Yet, we often find in the industry that the HART configuration is disabled or the data is not used. So when considering smart transmitters, you should have a plan for what to do with the data.

Wireless Sensors

Wireless sensors can generally be classified into two approaches. First, some control systems have wireless gateways that create a mesh network to bring sensors directly into the control system. These networks use the WirelessHART or ISA100.11a protocol, both of which are designed for industrial use. The sensor data is brought directly into the native control system environment, so tags are created like any other tag in the control system. Security concerns are addressed by the control system’s protocol and implementation. At this stage, the sensor data is within the control system and not accessible via internet connectivity. Of course, remote connection to a control system via the internet is commonplace, at which point this sensor data can be accessed.

The other approach to wireless sensors is using cell towers to connect sensors directly to the cloud. This can be a cost-effective and powerful opportunity for remote installations without the control system infrastructure. The ability to open a browser or app from your laptop or phone and see remote sensor data is fantastic. At the facility or enterprise level, this data can be added to a historian for inclusion in data analytics.

If all these considerations have been addressed, there is still a missing piece. You have real-time operational data, but beyond the transient signal shown on an HMI, the organization gains no benefit unless that data is used in data analytics or an automated solution.

However, wireless sensors still introduce some challenges for automation.

Analog vs. Wireless Sensors – Can they be trusted equally for automation?

Unlike wired analog sensors, wireless sensors have no live zero. A simple analog sensor uses four milliamps (not zero milliamps) as the bottom of its range, so it is easy to detect a cut wire or another malfunction: the current drops below four milliamps. With a wireless transmitter, how do you know when it is dead? These sensors run on batteries and may be configured to report by exception. Sending a value consumes considerable power and can drain a battery. Therefore, it is smart to send a value only when it changes, which reduces the maintenance burden. For many processes, a measurement may stay right where it is (within a noise band) for an extended period until there is a grade change. Yet, automation needs to know whether it can rely on the sensor input.
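To make the contrast concrete, here is a small Python sketch of the two health checks. It assumes things that are not stated above: the 3.6 mA fault threshold is an illustrative value (use whatever your transmitter documents), and the wireless check assumes the sensor is configured to send some message, even an unchanged value, at least once per `max_silence_s` seconds. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed fault threshold, somewhat below the 4 mA live zero (illustrative only).
LIVE_ZERO_FAULT_MA = 3.6


def wired_signal_ok(current_ma: float) -> bool:
    """A wired loop is suspect as soon as the current falls below the live zero."""
    return current_ma >= LIVE_ZERO_FAULT_MA


@dataclass
class WirelessHealth:
    """Staleness watchdog for a report-by-exception wireless sensor."""

    max_silence_s: float  # assumed longest allowed gap between any two messages
    last_message_s: Optional[float] = None

    def on_message(self, now_s: float) -> None:
        # Call on every received message, whether or not the value changed.
        self.last_message_s = now_s

    def is_alive(self, now_s: float) -> bool:
        # Never heard from the sensor, or silent for too long: do not trust it.
        if self.last_message_s is None:
            return False
        return (now_s - self.last_message_s) <= self.max_silence_s


health = WirelessHealth(max_silence_s=600.0)
health.on_message(now_s=100.0)
print(wired_signal_ok(0.0))          # False: cut wire
print(health.is_alive(now_s=500.0))  # True: last message 400 s ago
print(health.is_alive(now_s=800.0))  # False: silent for 700 s
```

The trade-off is visible in `max_silence_s`: a shorter window detects a dead sensor sooner but forces more transmissions and therefore more battery changes.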

Even if the health of the wireless sensor is good, a dynamic control algorithm (like PID) is built for deterministic, periodic data. However, some modifications can be made to PID to accommodate non-periodic updates. This needs to be understood when applying wireless sensors for automation.
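One simple way to picture such a modification is sketched below in Python: a PI controller that recalculates only when a new measurement arrives and integrates over the actual time since the previous update, instead of assuming a fixed scan period. This is a deliberately simplified illustration, not any vendor's algorithm, and the class name, gains, and output limits are hypothetical.

```python
from typing import Optional


class EventDrivenPI:
    """PI controller that updates only when a new measurement is received."""

    def __init__(self, kp: float, ki: float,
                 out_min: float = 0.0, out_max: float = 100.0) -> None:
        self.kp = kp
        self.ki = ki
        self.out_min = out_min
        self.out_max = out_max
        self.integral = 0.0
        self.last_update_s: Optional[float] = None
        self.output = out_min  # held constant between measurements

    def update(self, setpoint: float, measurement: float, now_s: float) -> float:
        error = setpoint - measurement
        candidate = self.integral
        if self.last_update_s is not None:
            # Integrate over the real elapsed time, not a fixed scan period.
            elapsed_s = now_s - self.last_update_s
            candidate += self.ki * error * elapsed_s
        unclamped = self.kp * error + candidate
        self.output = min(max(unclamped, self.out_min), self.out_max)
        if unclamped == self.output:
            # Simple anti-windup: accept the new integral only when not saturated.
            self.integral = candidate
        self.last_update_s = now_s
        return self.output


pi = EventDrivenPI(kp=1.0, ki=0.1)
print(pi.update(setpoint=50.0, measurement=40.0, now_s=0.0))   # 10.0
print(pi.update(setpoint=50.0, measurement=40.0, now_s=30.0))  # 40.0
```

Between calls to `update`, the output simply holds its last value, so a quiet sensor does not cause the integral to wind up on stale data. Whether that behavior is acceptable depends on the loop, which is exactly why this needs to be understood before a wireless sensor is used for control.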

The case of a remote sensor directly connected to the cloud presents additional challenges for automation. First, if this sensor data is used in an automated solution, it has to travel from the cloud down to a PLC or DCS controller to be used in logic, and that opens a door into the control system. Data is usually configured as a one-way connection from the control system to the cloud. A security risk is introduced when write access is granted going the other way.

Secondly, the internet is not deterministic. Therefore, relying on data from the cloud in an automated solution is risky. Even though the previously mentioned modification to PID can deal with non-periodic data, the unpredictable latency of internet communication makes automation very challenging.

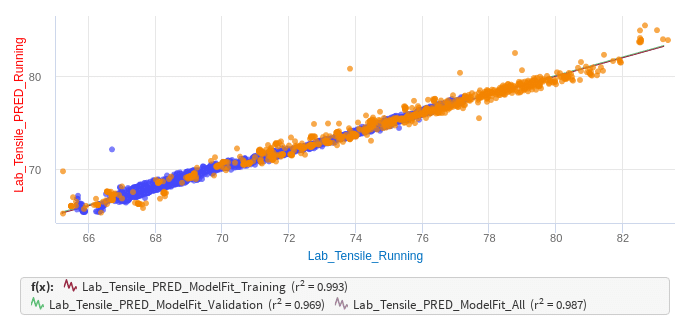

Pulmac understands this. We accompany our online measurements with automated solutions built to handle the challenges in sensor applications presented above. In addition, our DataSolve data analytics service turns your data into useful information for troubleshooting and optimization. Together, our offerings ensure that you capture the value of your investment.

We are in a digital world, and those who master the use of their data will be the winners.

Recommended reading: Use case: Save $2MM/yr in Production Costs With PulpEye On-line Measurement

Pulmac has solutions that can help you be a master of your data.